Contents

- 1. Scenario
- 2. Solution
- 3. Summary
- 4. References

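1. Scenario

I use Windows with WSL2 for day-to-day development and testing, but WSL2 frequently runs into networking problems. Today, for example, I was testing a project whose core function is to sync data from postgres to clickhouse using the open-source tool synch.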

Components required for the test:

- postgres
- kafka
- zookeeper
- redis
- synch container

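For the first round of testing I orchestrated these five services with docker-compose and set network_mode to "host". Given how Kafka's listener mechanism works, host mode seemed simplest because no ports have to be published separately.

The docker-compose.yaml file is as follows: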

```yaml
version: "3"
services:
  postgres:
    image: failymao/postgres:12.7
    container_name: postgres
    restart: unless-stopped
    privileged: true # set the docker-compose env file
    command: [ "-c", "config_file=/var/lib/postgresql/postgresql.conf", "-c", "hba_file=/var/lib/postgresql/pg_hba.conf" ]
    volumes:
      - ./config/postgresql.conf:/var/lib/postgresql/postgresql.conf
      - ./config/pg_hba.conf:/var/lib/postgresql/pg_hba.conf
    environment:
      POSTGRES_PASSWORD: abc123
      POSTGRES_USER: postgres
      POSTGRES_PORT: 15432
      POSTGRES_HOST: 127.0.0.1
    healthcheck:
      test: sh -c "sleep 5 && PGPASSWORD=abc123 psql -h 127.0.0.1 -U postgres -p 15432 -c '\\q';"
      interval: 30s
      timeout: 10s
      retries: 3
    network_mode: "host"

  zookeeper:
    image: failymao/zookeeper:1.4.0
    container_name: zookeeper
    restart: always
    network_mode: "host"

  kafka:
    image: failymao/kafka:1.4.0
    container_name: kafka
    restart: always
    depends_on:
      - zookeeper
    environment:
      KAFKA_ADVERTISED_HOST_NAME: kafka
      KAFKA_ZOOKEEPER_CONNECT: localhost:2181
      KAFKA_LISTENERS: PLAINTEXT://127.0.0.1:9092
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://127.0.0.1:9092
      KAFKA_BROKER_ID: 1
      KAFKA_LOG_RETENTION_HOURS: 24
      KAFKA_LOG_DIRS: /data/kafka-data # data mount
    network_mode: "host"

  producer:
    depends_on:
      - redis
      - kafka
      - zookeeper
    image: long2ice/synch
    container_name: producer
    command: sh -c "
      sleep 30 &&
      synch --alias pg2ch_test produce"
    volumes:
      - ./synch.yaml:/synch/synch.yaml
    network_mode: "host"

  # one consumer consumes one database
  consumer:
    tty: true
    depends_on:
      - redis
      - kafka
      - zookeeper
    image: long2ice/synch
    container_name: consumer
    command: sh -c
      "sleep 30 &&
      synch --alias pg2ch_test consume --schema pg2ch_test"
    volumes:
      - ./synch.yaml:/synch/synch.yaml
    network_mode: "host"

  redis:
    hostname: redis
    container_name: redis
    image: redis:latest
    volumes:
      - redis:/data
    network_mode: "host"

volumes:
  redis:
  kafka:
  zookeeper:
```

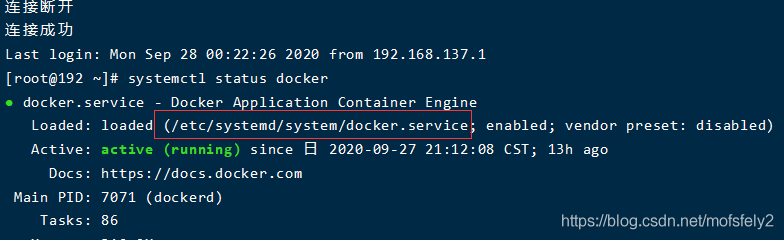

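The test needs postgres with the wal2json plugin. Installing the plugin inside the container turned out to be painful, and several attempts all failed, so I installed postgres on the host instead and pointed the synch service in the container at the host's IP and port.

After restarting the services, however, synch would not come up. The log showed that it could not connect to postgres. The synch configuration file was as follows: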

```yaml
core:
  debug: true # when set True, will display sql information.
  insert_num: 20000 # how many num to submit,recommend set 20000 when production
  insert_interval: 60 # how many seconds to submit,recommend set 60 when production
  # enable this will auto create database `synch` in ClickHouse and insert monitor data
  monitoring: true

redis:
  host: redis
  port: 6379
  db: 0
  password:
  prefix: synch
  sentinel: false # enable redis sentinel
  sentinel_hosts: # redis sentinel hosts
    - 127.0.0.1:5000
  sentinel_master: master
  queue_max_len: 200000 # stream max len, will delete redundant ones with FIFO

source_dbs:
  - db_type: postgres
    alias: pg2ch_test
    broker_type: kafka # current support redis and kafka
    host: 127.0.0.1
    port: 5433
    user: postgres
    password: abc123
    databases:
      - database: pg2ch_test
        auto_create: true
        tables:
          - table: pgbench_accounts
            auto_full_etl: true
            clickhouse_engine: CollapsingMergeTree
            sign_column: sign
            version_column:
            partition_by:
            settings:

clickhouse:
  # shard hosts when cluster, will insert by random
  hosts:
    - 127.0.0.1:9000
  user: default
  password: ''
  cluster_name: # enable cluster mode when not empty, and hosts must be more than one if enable.
  distributed_suffix: _all # distributed tables suffix, available in cluster

kafka:
  servers:
    - 127.0.0.1:9092
  topic_prefix: synch
```

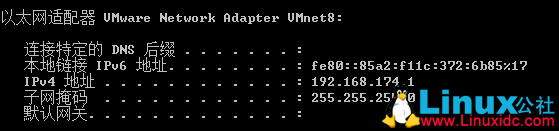

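This was puzzling. I first confirmed that postgres was running and listening on its port (5433 here), yet connecting through either localhost or the host's eth0 address still failed.

2. Solution

A Google search led to a highly upvoted Stack Overflow answer that solved the problem. The original answer reads: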
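A check like this can be repeated from inside the container with a plain TCP probe. The following is a minimal sketch and not part of the original setup: the helper name `can_connect` is hypothetical, and it only tests whether a TCP connection can be opened, not whether postgres accepts the credentials.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection resolves the name and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False
```

Calling `can_connect("127.0.0.1", 5433)` and `can_connect("host.docker.internal", 5433)` from the synch container (5433 being the postgres port used in this post) would show which address, if any, actually reaches the host's postgres.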

If you are using Docker-for-mac or Docker-for-Windows 18.03+, just connect to your mysql service using the host host.docker.internal (instead of the 127.0.0.1 in your connection string).

If you are using Docker-for-Linux 20.10.0+, you can also use the host host.docker.internal if you started your Docker container with the --add-host host.docker.internal:host-gateway option.

Otherwise, read below

Use **--network="host"** in your docker run command, then 127.0.0.1 in your docker container will point to your docker host.

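For more details, see the original post (linked in the references).

Accessing host services from inside a container in host mode

After changing the postgres host in the synch configuration to host.docker.internal, the error went away. The host's /etc/hosts file looks like this: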

```
root@failymao-NC:/mnt/d/pythonProject/pg_2_ch_demo# cat /etc/hosts
# This file was automatically generated by WSL. To stop automatic generation of this file, add the following entry to /etc/wsl.conf:
# [network]
# generateHosts = false
127.0.0.1 localhost
10.111.130.24 host.docker.internal
```

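The file maps the host's IP to a domain name. Accessing that name resolves to the host's IP, which is how the host's services are reached.

The final synch configuration that brings the service up is as follows: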
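The lookup this mapping enables can be illustrated with a small sketch. It reads hosts-file text in the usual format (the first field is the IP, the remaining fields are names, and `#` starts a comment). The function name `parse_hosts` is hypothetical, the sample text is an abridged copy of the file shown above, and real name resolution also consults DNS and other sources that this ignores.

```python
def parse_hosts(text: str) -> dict[str, str]:
    """Map each hostname in hosts-file text to its IP address."""
    mapping = {}
    for line in text.splitlines():
        # Drop comments and surrounding whitespace.
        entry = line.split("#", 1)[0].strip()
        if not entry:
            continue
        ip, *names = entry.split()
        for name in names:
            # Keep the first mapping seen for a name.
            mapping.setdefault(name, ip)
    return mapping


SAMPLE = """\
# This file was automatically generated by WSL.
127.0.0.1 localhost
10.111.130.24 host.docker.internal
"""

hosts = parse_hosts(SAMPLE)
print(hosts["host.docker.internal"])  # 10.111.130.24
```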

```yaml
core:
  debug: true # when set True, will display sql information.
  insert_num: 20000 # how many num to submit,recommend set 20000 when production
  insert_interval: 60 # how many seconds to submit,recommend set 60 when production
  # enable this will auto create database `synch` in ClickHouse and insert monitor data
  monitoring: true

redis:
  host: redis
  port: 6379
  db: 0
  password:
  prefix: synch
  sentinel: false # enable redis sentinel
  sentinel_hosts: # redis sentinel hosts
    - 127.0.0.1:5000
  sentinel_master: master
  queue_max_len: 200000 # stream max len, will delete redundant ones with FIFO

source_dbs:
  - db_type: postgres
    alias: pg2ch_test
    broker_type: kafka # current support redis and kafka
    host: host.docker.internal
    port: 5433
    user: postgres
    password: abc123
    databases:
      - database: pg2ch_test
        auto_create: true
        tables:
          - table: pgbench_accounts
            auto_full_etl: true
            clickhouse_engine: CollapsingMergeTree
            sign_column: sign
            version_column:
            partition_by:
            settings:

clickhouse:
  # shard hosts when cluster, will insert by random
  hosts:
    - 127.0.0.1:9000
  user: default
  password: ''
  cluster_name: # enable cluster mode when not empty, and hosts must be more than one if enable.
  distributed_suffix: _all # distributed tables suffix, available in cluster

kafka:
  servers:
    - 127.0.0.1:9092
  topic_prefix: synch
```

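3. Summary

In this Windows + WSL2 setup, when a container is started with --network="host" and needs to reach a service running on the host, change the IP to `host.docker.internal`.

4. References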

https://stackoverflow.com/questions/24319662/from-inside-of-a-docker-container-how-do-i-connect-to-the-localhost-of-the-mach


Original link: https://www.cnblogs.com/failymao/p/15431490.html
